The Shift Happening Right Now

Google still processes roughly 8.5 billion searches per day. That number hasn’t collapsed. But the way people use those results — and the alternatives they now reach for — has changed in ways that matter more than traffic volume alone.

ChatGPT surpassed 200 million weekly active users by early 2025, a number that has continued to grow through 2026. Perplexity reported over 15 million monthly users, skewing heavily toward professionals and researchers who make purchasing decisions. Google’s own AI Overviews are now triggered on an estimated 15–30% of queries, depending on the vertical. The user counts are not projections from optimistic AI startups; they are figures reported by the platforms themselves. The AI Overviews range is an estimate, but it comes from independent researchers who track it.

Here is what changed structurally: in traditional search, even a poor result had visibility. A listing on page two of Google was bad, but it was something. A user who scrolled could still find you. In AI-generated answers, there is no scrolling. The model assembles an answer from its training data and, in many cases, from real-time web retrieval. It cites a handful of sources — typically three to eight. Everything else is invisible. Not ranked low. Invisible.

This creates a winner-take-most dynamic that is more extreme than anything traditional search produced. When someone asks ChatGPT “what are the best website design agencies for e-commerce,” the model names five or six. Maybe it cites their websites, maybe it doesn’t. But the hundred other agencies that would have appeared across ten pages of Google results? They don’t exist in that interaction.

The behavioral shift matters too. Users asking AI for recommendations tend to trust the answer more than a search result listing. A search result is understood as an advertisement or an algorithm’s best guess. An AI recommendation feels like advice from a knowledgeable assistant. Research from the Nielsen Norman Group has shown that users perceive AI-generated answers as more authoritative than equivalent information found through manual search, even when the underlying sources are identical.

For brands, this creates two distinct problems. The first is discovery: if AI doesn’t mention you, a growing segment of potential customers will never encounter your brand during their research phase. The second is framing: when AI does mention you, the way it describes your brand — the words it uses, the competitors it places you alongside, the strengths it attributes to you — becomes a form of brand narrative that you didn’t write and may not control.

Both problems are solvable. But solving them requires understanding a system that works very differently from the search engine optimization most businesses are familiar with.

The Terminology Mess: AEO, GEO, LLMO — What These Actually Mean

Search any marketing forum for advice on AI search optimization and you will immediately encounter a wall of acronyms: AEO, GEO, LLMO, AI SEO, GAIO, AIO. Some of these describe meaningfully different approaches. Most of them describe the same set of activities with different branding.

AEO — Answer Engine Optimization

AEO is the oldest of these terms. It was coined to describe optimization for platforms that deliver direct answers rather than links, originally voice assistants (Alexa, Siri) and Google’s Featured Snippets, and it has since been extended to ChatGPT, Perplexity, and other AI chat interfaces. The core idea: structure your content so AI systems can extract clean, direct answers from it. This includes FAQ formatting, concise definition paragraphs, comparison tables with real data, and schema markup that makes your content machine-readable.

GEO — Generative Engine Optimization

GEO comes from a 2023 research paper by a team at Georgia Tech, Princeton, the Allen Institute, and IIT Delhi. The paper, “GEO: Generative Engine Optimization,” proposed a formal framework for optimizing content to improve visibility in AI-generated responses. Their key finding: adding citations, statistics, and quotations from authoritative sources significantly increased a page’s likelihood of being referenced by generative AI. The paper gave the field academic grounding and a specific vocabulary, but the tactics it identified — cite your sources, include data, reference experts — are extensions of principles that good journalism and technical writing have followed for decades.

LLMO — Large Language Model Optimization

LLMO focuses specifically on influencing what large language models say about your brand, even when they aren’t browsing the web. This is the most misunderstood of the three because it addresses training data (the information a model absorbed during its training phase), which is fundamentally different from real-time search optimization. You cannot “optimize” for training data retroactively; that data is already frozen. What you can do is build the kind of web presence that makes future training data more likely to contain strong, consistent signals about your brand.

Where They Overlap, and Why the Naming Doesn’t Matter Much

A common Reddit question is: “Is AEO different from SEO?” The honest answer is that roughly 70% of what constitutes good AI search optimization is indistinguishable from good SEO executed with modern search behavior in mind. Proper semantic HTML, authoritative content, strong entity signals, fast-loading pages, schema markup — these help you in Google’s traditional results, in AI Overviews, in Perplexity citations, and in ChatGPT recommendations. The remaining 30% involves strategies specific to AI: entity consistency across platforms, passage-level optimization for citation, training data influence, and platform-specific technical considerations like Bing index optimization for ChatGPT.

The acronym you use doesn’t change the work. What matters is understanding the mechanisms — and most of the advice floating around conflates mechanisms that work very differently.

How AI Decides Who to Recommend

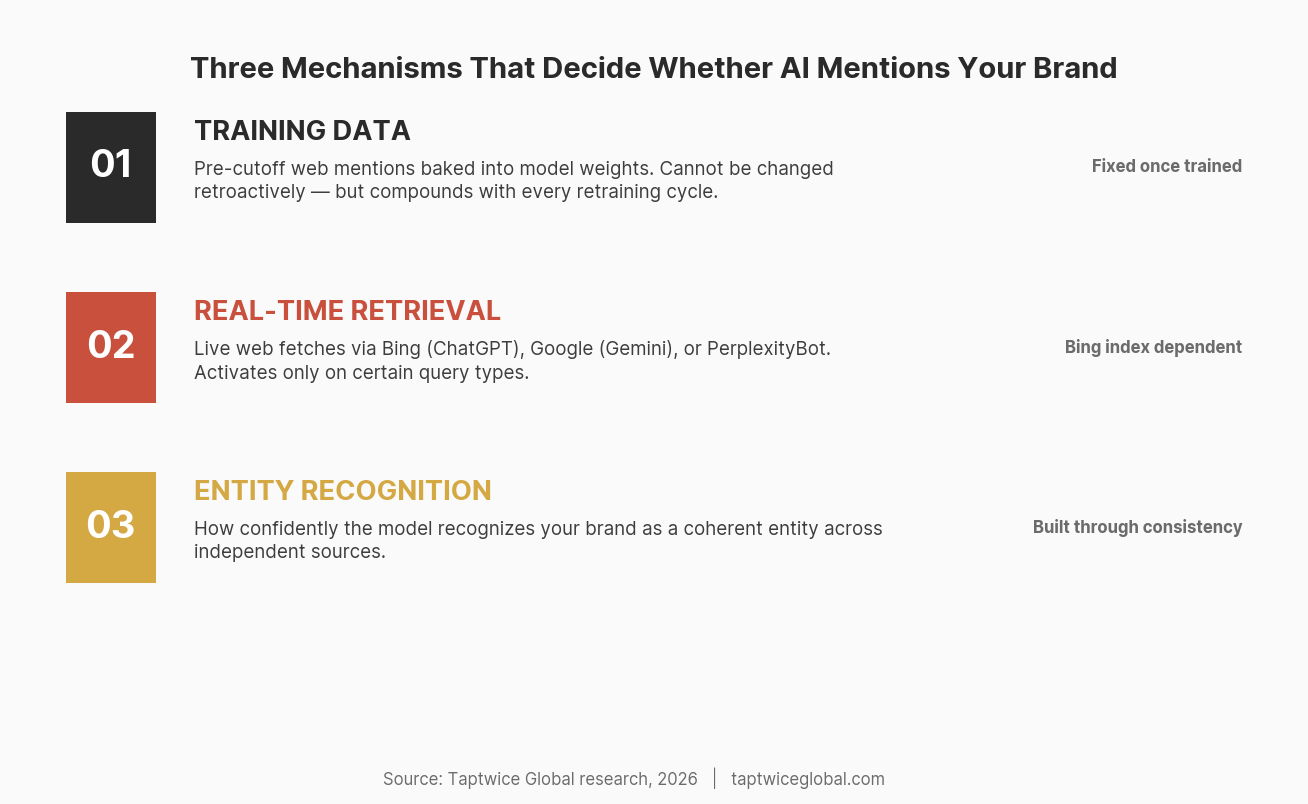

This is where most articles on AI search optimization fail their readers. They describe tactics without explaining the underlying systems. There are three distinct mechanisms that determine whether your brand appears in AI-generated answers, and each requires a different approach.

Mechanism 1: Training Data

Every large language model is trained on a massive corpus of text scraped from the internet, books, academic papers, and other sources, and every model, whether GPT-4, Claude, or Gemini, has a training cutoff. Whatever information existed in publicly accessible text before that date is baked into the model’s weights. This is why ChatGPT can recommend well-known brands even with web search disabled: it learned about them during training.

You cannot retroactively change training data. If your brand was barely present on the web when training data was collected, the model may not know you exist, regardless of your current Google rankings. However, models are retrained periodically, and future training runs can capture your current web presence. This is why building a strong, consistent web footprint now has compounding returns: it influences not just live search but future model knowledge.

What the model learned during training is also influenced by frequency and context. A brand mentioned across hundreds of independent sources in consistent contexts (e.g., “Agency X specializes in e-commerce website design”) creates a stronger internal representation than a brand mentioned only on its own website. This is entity formation — the process by which a model builds an internal concept of what your brand is, what it does, and how it relates to other entities in its knowledge.

Mechanism 2: Real-Time Web Retrieval

When ChatGPT browses the web, it uses Bing’s search index. When Perplexity answers a query, it uses its own crawler (PerplexityBot) combined with Bing results. When Google generates AI Overviews, it draws from Google’s own search index. When Google’s AI Mode operates, it blends Gemini’s capabilities with Google Search infrastructure.

This means real-time AI responses are heavily influenced by existing search infrastructure. If your site is not indexed by Bing, ChatGPT with web browsing literally cannot find you. We have seen this pattern repeatedly: brands with strong Google visibility but no Bing Webmaster Tools setup are completely absent from ChatGPT’s web-browsing responses.

The retrieval mechanism also matters for citation. AI systems that browse the web tend to cite the pages they retrieve, and the selection of which pages to retrieve follows different rules than traditional search ranking. Perplexity, for example, favors pages with clear, structured answers — well-formatted FAQ sections, comparison tables, step-by-step guides — over pages that might rank well on Google but contain the information buried in long-form narrative text. The model is looking for extractable answers, not the best web page.

Mechanism 3: Entity Recognition

This is the least discussed and arguably most important mechanism. AI models build internal representations of entities — brands, people, products, organizations. The strength of your entity representation determines how readily the model thinks of you when answering a relevant query.

Entity strength is built through what we call signal consistency: your brand information appearing the same way across multiple independent sources. Your company name, founding date, service descriptions, leadership team, headquarters location, and service areas should be consistent across your website, LinkedIn, Crunchbase, industry directories, Wikipedia (if notable enough), press coverage, and any other authoritative source. When AI encounters the same entity described consistently across dozens of independent contexts, it builds a strong, confident internal representation.

Fragmented signals do the opposite. If your LinkedIn says “digital marketing agency,” your website says “growth consultancy,” and Clutch lists you as “web development firm,” the model has three weak, possibly unconnected entities instead of one strong one. We work extensively on entity SEO for this reason — it is the foundation that makes every other AI search optimization effort effective.
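A consistency audit like the one described above can be partly scripted. The Python sketch below is illustrative only: the platform names, field names, and profile values are hypothetical, and in practice the data would be gathered by hand or from each platform. It normalizes case and whitespace, then reports every field that has more than one distinct value across platforms.

```python
"""Flag brand fields that disagree across platforms (hypothetical sample data)."""
from collections import defaultdict

# Hypothetical profile data; field names and values are illustrative only.
profiles = {
    "website": {"name": "Acme Digital", "category": "E-commerce web design agency", "founded": "2016"},
    "linkedin": {"name": "Acme Digital", "category": "Digital marketing agency", "founded": "2016"},
    "clutch": {"name": "Acme Digital Ltd.", "category": "Web development firm", "founded": "2015"},
}


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences don't count."""
    return " ".join(value.lower().split())


def find_inconsistencies(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """For each field with more than one distinct value, map each value to the platforms using it."""
    values_by_field: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for platform, fields in profiles.items():
        for field, value in fields.items():
            values_by_field[field][normalize(value)].append(platform)
    return {
        field: dict(variants)
        for field, variants in values_by_field.items()
        if len(variants) > 1
    }


for field, variants in find_inconsistencies(profiles).items():
    print(f"{field}: {variants}")
```

Run against the sample data, it flags the name, category, and founding-year fields. It treats “Acme Digital Ltd.” and “Acme Digital” as different names; that strictness is deliberate, since a legal suffix on one platform and not another is exactly the kind of drift worth reviewing by eye.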

The Insight Most Advice Misses

Training data presence and real-time retrieval are fundamentally different channels, and most AI search optimization advice conflates them. Tactics that improve your chances of being retrieved during a live web search (structured content, Bing indexing, FAQ formatting) do nothing for what the model already knows from training. Tactics that build entity strength (consistent cross-platform presence, authoritative mentions, Wikipedia notability) affect training data influence but may not change real-time retrieval at all.

Effective AI search optimization works both channels simultaneously, but recognizes which signals affect which mechanism.

Why Ranking #1 on Google Doesn’t Mean AI Knows You Exist

This is the pain point we see most frequently in forums. A typical Reddit post: “why does perplexity/gemini ignore my site even though we rank on google??” It’s a legitimate frustration, and the answer isn’t simple.

Different Indexes, Different Selection Criteria

Google’s search algorithm and Perplexity’s source selection algorithm are different systems optimized for different goals. Google ranks pages. Perplexity selects passages. Google considers hundreds of ranking signals accumulated over decades — backlinks, page authority, user engagement signals, Core Web Vitals. Perplexity looks for the passage that most directly and clearly answers the query, from a source it deems credible.

A page can rank #1 on Google for “best CRM for small business” because it has strong domain authority, excellent backlinks, and high engagement metrics, while burying the actual answer in paragraph 47 behind a newsletter signup wall. Perplexity is likely to skip that page in favor of a lower-ranking page that has a clean comparison table in the first 500 words.

ChatGPT with web browsing faces a different constraint: it searches Bing, not Google. The Bing index and Google index overlap substantially, but they are not identical. Sites that have never submitted to Bing Webmaster Tools, that have technical issues preventing Bing’s crawler from accessing their content, or that simply haven’t built authority signals that Bing recognizes will be invisible to ChatGPT’s web search, regardless of their Google rankings.

Entity Fragmentation

The second reason Google rankings don’t translate to AI visibility is entity fragmentation. Google doesn’t need a coherent understanding of your brand entity to rank your pages — it ranks pages, not brands. AI systems, however, recommend brands. They need to understand what your brand is, what it does, and why it’s relevant to the query.

If your brand information is scattered across the web in inconsistent forms — different company descriptions on every platform, outdated information on industry directories, a LinkedIn page that hasn’t been updated in three years, a Crunchbase profile with wrong employee count — the AI model’s internal representation of your brand is weak or conflicted. It may know your brand exists but lack confidence in what you actually do, which means it won’t recommend you for specific queries.

We audited a B2B SaaS company that ranked in the top three on Google for seven high-value keywords. Their ChatGPT visibility was nearly zero. The cause: they had three different brand descriptions across their website, LinkedIn, and G2, two different founding years listed online, and their CEO’s LinkedIn title didn’t match the company’s About page. After consolidating these signals and publishing consistent information across twelve platforms, their ChatGPT mention rate for branded and category queries improved substantially within one training cycle.

Bing Matters More Than You Think

This deserves its own emphasis. ChatGPT’s web search runs on Bing. Microsoft Copilot runs on Bing. These are two of the largest AI interfaces in the world. If your Bing optimization is an afterthought — or nonexistent — you are invisible to a massive channel.

Practical steps that take under an hour: verify your site in Bing Webmaster Tools, submit your sitemap, check your Bing crawl stats for errors, and review your IndexNow implementation (Bing supports IndexNow, which lets you notify it of new or changed URLs as soon as they are published instead of waiting for the next crawl). These are low-effort, high-impact actions that most SEO professionals have ignored for years because Bing’s direct search traffic was negligible. With ChatGPT and Copilot in the picture, that calculus has changed entirely.
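For the IndexNow step, the protocol published at indexnow.org accepts a JSON POST listing changed URLs, plus a key that you also host as a text file on your own site so the search engine can verify ownership. The following is a minimal Python sketch of building that request with only the standard library. The endpoint constant, host, key, and URLs are assumptions to check against the current IndexNow documentation, and the function names are hypothetical.

```python
import json
import re
import urllib.request
from urllib.parse import urlparse

# Endpoint and key rules follow the public IndexNow protocol; confirm them at indexnow.org.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"
KEY_PATTERN = re.compile(r"[A-Za-z0-9-]{8,128}")


def build_indexnow_request(host: str, key: str, urls: list[str]) -> urllib.request.Request:
    """Build (but do not send) a bulk IndexNow submission for URLs on one host."""
    if not KEY_PATTERN.fullmatch(key):
        raise ValueError("key must be 8-128 letters, digits, or dashes")
    off_host = [u for u in urls if urlparse(u).hostname != host]
    if off_host:
        raise ValueError(f"URLs not on {host}: {off_host}")
    payload = {
        "host": host,
        "key": key,
        # The engine fetches this file and checks that it contains the key.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


def submit(request: urllib.request.Request) -> int:
    """Send the request and return the HTTP status (urlopen raises on 4xx/5xx)."""
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


# Hypothetical site and key; the key file must already be live at keyLocation.
req = build_indexnow_request(
    "www.example.com", "3f9a1c2e7b4d4e0f", ["https://www.example.com/services/new-page"]
)
print(req.get_method(), req.full_url)
```

Building the request and sending it are kept separate so the payload can be inspected before anything goes over the network. Only call `submit` once the key file is live at the `keyLocation` URL.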

Where the Experts Disagree — and What That Means for You

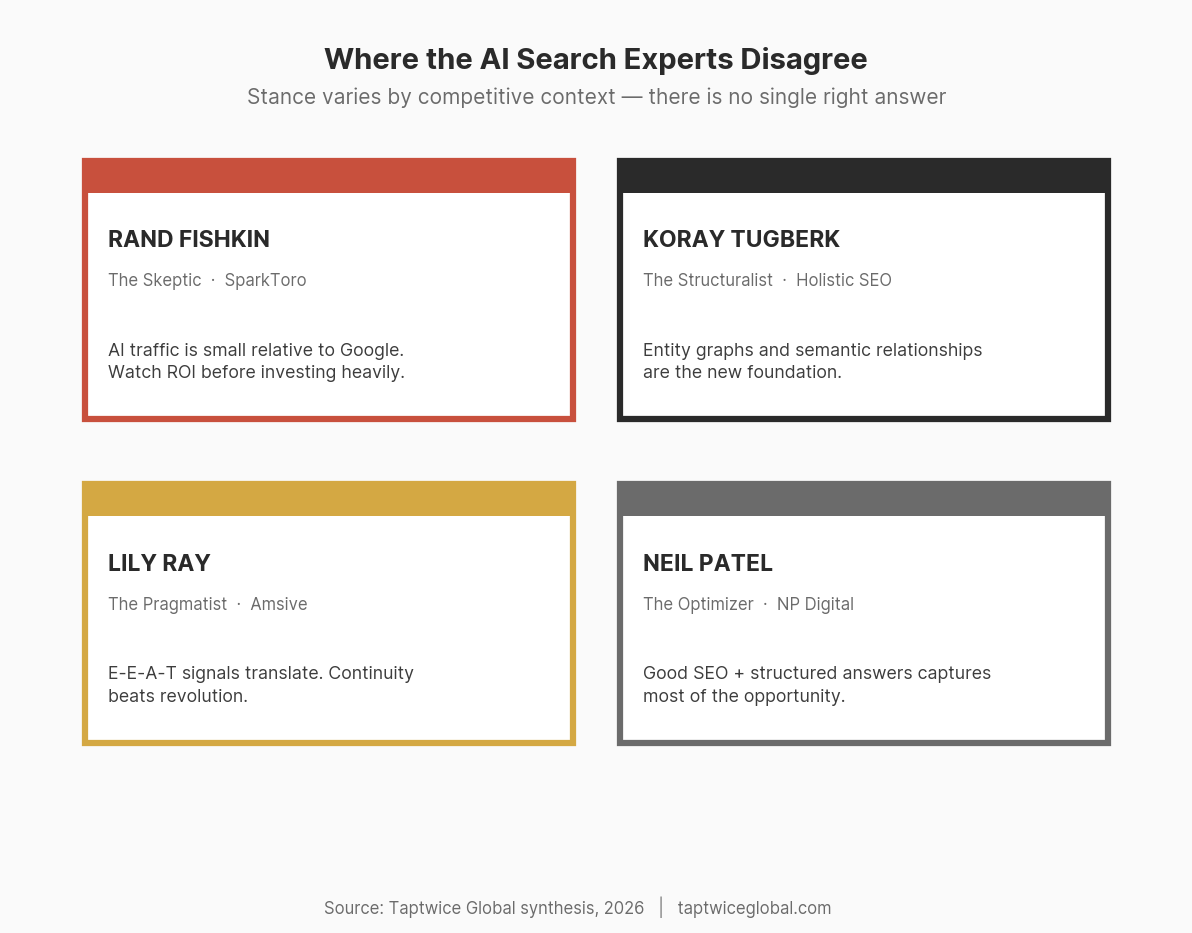

AI search optimization is a young discipline, and smart people have fundamentally different views on its importance, its mechanics, and its future. Pretending there’s consensus would be dishonest. Here’s the landscape of informed opinion.

The Skeptic: Rand Fishkin

Rand Fishkin, founder of SparkToro, has been the most prominent voice questioning the hype around AI search optimization. His argument: the share of total search volume going through AI platforms is still small relative to Google. The zero-click trend — where users get answers without visiting any website — means that even if AI mentions your brand, it may not drive meaningful traffic. He warns that businesses are being sold expensive “AEO services” based on speculative future behavior rather than current measurable impact.

Fishkin’s skepticism is worth taking seriously. He was right about the zero-click trend before most of the industry acknowledged it. His caution about ROI measurement in AI search is legitimate — the measurement infrastructure genuinely doesn’t exist yet in mature form. Where his analysis may underweight the risk is on the brand-formation side: even if AI search doesn’t drive direct clicks today, being excluded from AI recommendations shapes brand perception in ways that compound over time.

The Structuralist: Koray Tugberk

Koray Tugberk of Holistic SEO has positioned entity optimization and semantic SEO as the foundation of AI visibility. His argument: AI systems understand topics through entities and their relationships, so building a strong entity graph — where your brand is clearly connected to the topics, services, and concepts it should be associated with — is fundamentally different from traditional keyword optimization. He sees this as a paradigm shift, not an incremental change.

Tugberk’s framework is intellectually rigorous and aligns with how transformer-based models actually process information. The critique is that his approach requires significant technical sophistication to implement and may overstate how precisely brands can control their entity representations in model training. The directional advice — build consistent, well-structured entity signals — is sound even if the granular semantic mapping he advocates is difficult to execute at scale.

The Pragmatist: Lily Ray

Lily Ray, SVP of SEO at Amsive, approaches AI search through the lens of Google’s E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness). Her position: the signals that build E-E-A-T for traditional search — author credentials, site reputation, accurate information, cited sources — also build the kind of authority that AI systems respect. She’s less interested in declaring a revolution and more focused on what the continuity between traditional SEO and AI optimization looks like in practice.

Ray’s approach is practical and immediately actionable. It’s also the easiest for existing SEO teams to implement because it builds on familiar concepts. The limitation is that E-E-A-T was designed as a quality assessment framework for human evaluators and Google’s algorithms — whether it maps cleanly to how Perplexity selects sources or how ChatGPT evaluates brand authority is an assumption, not a proven relationship.

The Optimizer: Neil Patel

Neil Patel’s position is the most accessible: do good SEO, structure your content to provide direct answers, and you’ll capture most of the AI search opportunity. He emphasizes practical tactics — FAQ sections, structured data, concise answer paragraphs — over theoretical frameworks. His message to most businesses: you don’t need a separate “AEO strategy.” You need better content strategy that accounts for how AI extracts answers.

Patel’s advice is correct for the majority of businesses. If your SEO fundamentals are weak, fixing those will do more for your AI visibility than any AI-specific tactic. Where his analysis is less useful is for competitive verticals where multiple players have strong SEO and the marginal factors that determine AI citation — entity strength, passage-level optimization, cross-platform presence — become the differentiators.

How to Think About the Disagreement

These perspectives aren’t randomly distributed. They map to a spectrum based on how competitive your market is and how much AI search affects your buyer’s journey:

- Low competition, simple buyer journey: Patel’s advice is sufficient. Fix your SEO basics, add structured answers, and move on.

- Moderate competition, considered purchases: Ray’s E-E-A-T framework plus basic entity consistency will put you ahead of most competitors.

- High competition, complex buyer journey: Tugberk’s entity-first approach becomes essential. Your competitors all have good SEO — the fight is over entity strength and authority signals.

- Emerging or uncertain market: Fishkin’s caution applies. Measure before you invest heavily, and don’t let an agency sell you transformation when the channel itself is still forming.

The right approach depends on where you sit. Blanket advice that ignores competitive context is the most common failure mode in AI search optimization content.

The Platforms That Matter and How They Differ

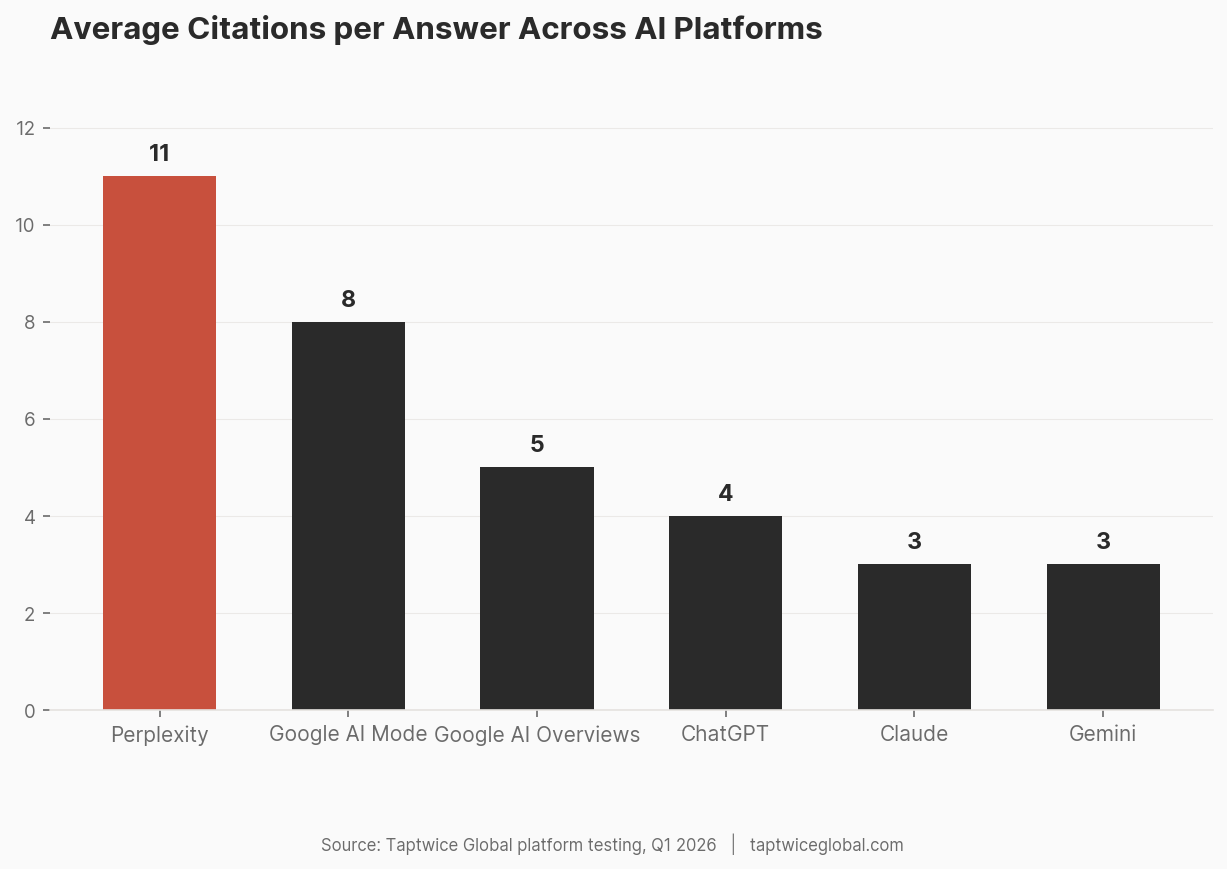

One of the most common mistakes in AI search optimization is treating “AI” as a monolithic platform. Each major AI system has different source selection mechanisms, different indexes, and different behavior. Optimizing for one does not guarantee visibility on the others.

ChatGPT (OpenAI)

With 200+ million weekly active users, ChatGPT is the highest-traffic AI platform. When web search is enabled, it queries Bing’s index. When operating from training data alone, it draws on information from its last training cutoff. ChatGPT tends to provide balanced, multi-option recommendations (naming several brands rather than one), and it increasingly provides citation links when browsing the web. Key factor: Bing indexing is a prerequisite. If Bing can’t crawl your site, ChatGPT’s web search can’t find you.

Google AI Overviews

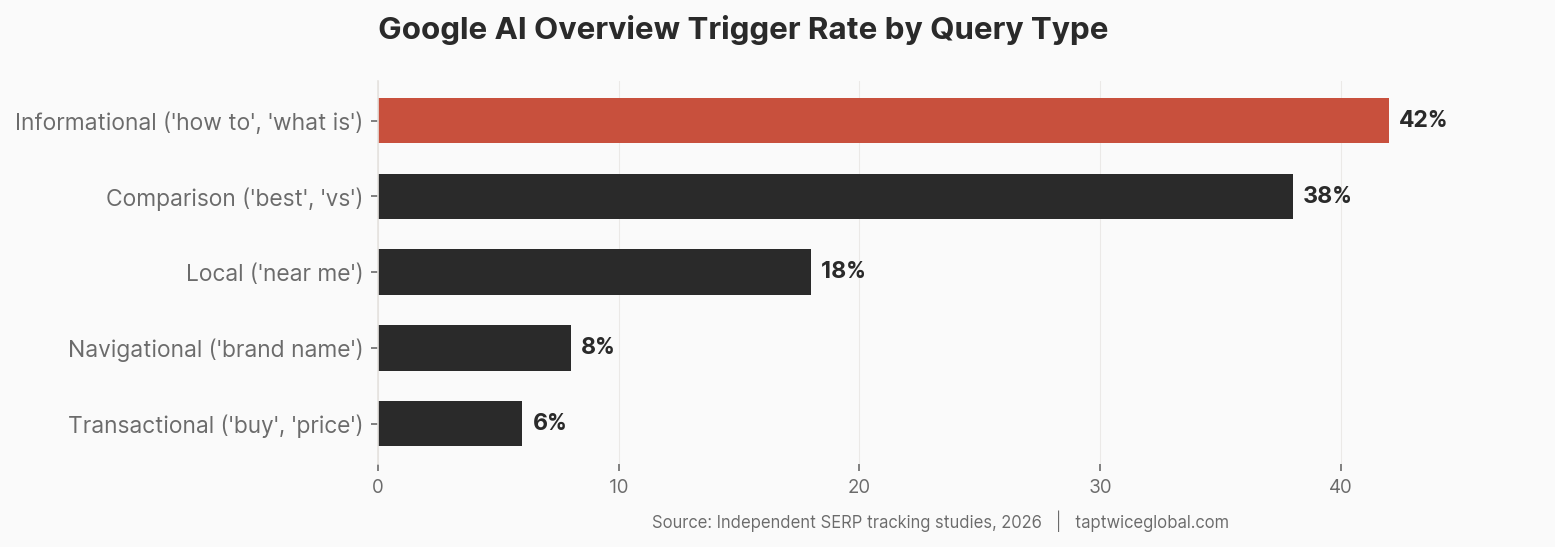

AI Overviews appear directly in Google search results, triggered on an estimated 15–30% of queries (higher in informational and comparison queries, lower in navigational and transactional). They draw from Google’s own search index, meaning your Google SEO work directly influences your AI Overview visibility. However, the selection criteria for AI Overview citations differ from organic ranking: Google’s AI tends to favor pages with clear, structured answers — definition paragraphs, numbered lists, comparison data — over pages optimized for traditional engagement metrics. A page ranking #8 with a perfect answer paragraph can be cited in the AI Overview above a page ranking #1.

Perplexity

Perplexity has carved out a niche with professionals and researchers. Its source selection is notably different from other platforms: it uses its own crawler (PerplexityBot) combined with Bing’s index, and it places heavy emphasis on recency and source diversity. Perplexity tends to cite more sources per answer than other platforms (often 8–15 citations) and skews toward pages with structured, data-rich content. If your site blocks PerplexityBot in robots.txt (some publishers do), you are invisible on this platform regardless of your content quality.
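Whether a given robots.txt blocks PerplexityBot (or Bing’s crawler, which matters for ChatGPT and Copilot) can be checked with Python’s standard `urllib.robotparser`. The robots.txt content and URL below are hypothetical examples. One caveat: this parser uses simple prefix matching and does not reproduce every crawler’s exact rule precedence, so treat it as a quick screen rather than a definitive answer.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks PerplexityBot site-wide.
ROBOTS_TXT = """\
User-agent: PerplexityBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def crawler_access(robots_txt: str, url: str, crawlers: list[str]) -> dict[str, bool]:
    """Report, per crawler user-agent token, whether robots.txt lets it fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in crawlers}


print(crawler_access(ROBOTS_TXT, "https://www.example.com/pricing",
                     ["PerplexityBot", "bingbot", "Googlebot"]))
```

With this sample file, PerplexityBot is blocked from the whole site while bingbot and Googlebot can fetch `/pricing`. In practice you would pass in the text of your live robots.txt.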

Google AI Mode and Gemini

Google’s AI Mode is an expanding feature that provides deeper, more conversational AI responses within Google Search. It uses Gemini’s capabilities combined with Google’s search infrastructure. Early observations suggest it cites a wider range of sources than standard AI Overviews and handles complex, multi-step queries in more depth. Gemini as a standalone product is also growing and shares the same underlying capabilities. Key factor: Google Search index visibility remains the primary input.

Claude, Grok, and Meta AI

These platforms are growing in user base but have different optimization implications. Claude (Anthropic) draws heavily on training data, although it can also search the web when that feature is enabled. Grok (xAI) integrates with X (Twitter) data and has real-time social media context that other models lack. Meta AI is embedded across Facebook, Instagram, and WhatsApp, giving it enormous reach, though mostly for consumer queries.

For most businesses, the priority order is: Google AI Overviews (because the traffic volume dwarfs other platforms), ChatGPT (largest dedicated AI user base), Perplexity (highest-intent professional users), and then the remaining platforms based on your specific audience. Trying to optimize for all platforms simultaneously without prioritization leads to unfocused effort and poor results.

What Actually Moves the Needle

Based on our analysis of citation patterns across AI platforms — examining which sources get cited, how content is structured on cited pages versus non-cited competitors, and what entity signals correlate with recommendation — here is what consistently matters.

Entity Signals

Schema markup is the technical foundation. Specifically, Organization schema with detailed properties: name, description, foundingDate, founder, areaServed, the services you offer, and sameAs links pointing to all official profiles (LinkedIn, Crunchbase, social media, directories). This gives AI systems a machine-readable entity card that anchors your brand information.
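As an illustration, the Python snippet below assembles Organization markup as JSON-LD and wraps it in the script tag that pages typically embed. Every value is a placeholder for a hypothetical company. The properties used (`foundingDate`, `founder`, `areaServed`, `makesOffer`, `sameAs`) are standard schema.org vocabulary, but which ones you include, and how you describe your services, is a judgment call.

```python
import json

# All values are placeholders for a hypothetical company.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Acme Digital",
    "url": "https://www.example.com",
    "description": "Acme Digital designs and builds e-commerce websites for mid-sized retailers.",
    "foundingDate": "2016",
    "founder": [{"@type": "Person", "name": "Jane Doe"}],
    "areaServed": ["United Kingdom", "Ireland"],
    # Services expressed as Offers of Service items.
    "makesOffer": [
        {"@type": "Offer", "itemOffered": {"@type": "Service", "name": "E-commerce website design"}},
        {"@type": "Offer", "itemOffered": {"@type": "Service", "name": "Shopify development"}},
    ],
    # sameAs ties this entity to its profiles elsewhere; list every official one.
    "sameAs": [
        "https://www.linkedin.com/company/example",
        "https://www.crunchbase.com/organization/example",
        "https://clutch.co/profile/example",
    ],
}


def to_json_ld_script(data: dict) -> str:
    """Serialize structured data into the script tag that is placed in the page HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


print(to_json_ld_script(organization))
```

Keeping the data in one place makes it easier to reuse the same name, description, and founding date wherever else the brand is described.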

But schema alone is insufficient. The entity must be confirmed across independent sources. A brand with Organization schema on its website but no LinkedIn company page, no Crunchbase profile, no industry directory listings, and no press mentions has a single self-declared entity. AI systems weight self-declared information lower than independently confirmed information — the same principle behind why Wikipedia requires independent sources for notability.

In our work, we’ve found that brands appearing consistently across at least eight independent platforms (website, LinkedIn, Crunchbase or equivalent, two industry directories, one review platform, one press mention, and one social profile) have measurably stronger AI presence than brands with equivalent content quality but fewer cross-platform signals.
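The eight-platform baseline above translates into a simple checklist. The following Python sketch, with hypothetical category labels and a hypothetical inventory, counts listings per category and reports how many are still missing against those minimums. It checks presence only; it says nothing about whether the listings agree with each other.

```python
from collections import Counter

# Minimum listings per category, following the eight-platform breakdown above.
# Category labels are hypothetical names chosen for this sketch.
REQUIRED = {
    "website": 1,
    "linkedin": 1,
    "company_database": 1,    # Crunchbase or equivalent
    "industry_directory": 2,
    "review_platform": 1,
    "press_mention": 1,
    "social_profile": 1,
}


def coverage_gaps(presences: list[tuple[str, str]]) -> dict[str, int]:
    """Return how many more listings each category needs to reach its minimum."""
    counts = Counter(category for category, _ in presences)
    return {cat: need - counts[cat] for cat, need in REQUIRED.items() if counts[cat] < need}


# Hypothetical inventory of (category, where) pairs for one brand.
presences = [
    ("website", "example.com"),
    ("linkedin", "linkedin.com/company/example"),
    ("company_database", "crunchbase.com/organization/example"),
    ("industry_directory", "clutch.co/profile/example"),
    ("social_profile", "x.com/example"),
]

print(coverage_gaps(presences))
```

For this inventory, the brand still needs one more industry directory, a review platform listing, and a press mention.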

Content Structure for Citation

AI systems cite passages, not pages. This is perhaps the most actionable insight in AI search optimization. When ChatGPT or Perplexity cites your website, it’s not citing the page as a whole — it’s extracting a specific passage that directly answers the user’s query. This means individual paragraphs need to function as standalone answers.

The patterns that consistently earn citations:

- Direct-answer paragraphs: Paragraphs that open with a clear statement answering a specific question, followed by supporting evidence. “Entity SEO is the practice of optimizing how search engines and AI systems recognize and categorize your brand as a distinct entity…” works. A paragraph that takes 200 words to arrive at the definition doesn’t.

- Comparison tables with real data: Tables comparing tools, services, or approaches with specific attributes. AI systems extract tabular data efficiently and cite tables at a high rate.

- FAQ sections matching real queries: Not corporate FAQ sections (“How do I contact support?”) but questions matching actual search behavior (“Does schema markup affect AI search visibility?”). Use the language people actually use — check Reddit, People Also Ask boxes, and forum threads.

- Statistics with attribution: “According to a 2025 Gartner report, 47% of enterprise decision-makers used AI platforms for vendor research” is citable. “Many businesses are turning to AI” is not.
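The direct-answer pattern can be screened for roughly by measuring how far into a paragraph the key term first appears. This Python sketch is a hypothetical heuristic, not a known ranking signal: the 25-word threshold and the sample paragraphs are assumptions, and a flagged paragraph is only a prompt to consider moving the answer up.

```python
import re
from typing import Optional


def words_before_term(paragraph: str, term: str) -> Optional[int]:
    """Number of words preceding the first (case-insensitive) mention of `term`, or None."""
    match = re.search(re.escape(term), paragraph, flags=re.IGNORECASE)
    if match is None:
        return None
    return len(paragraph[: match.start()].split())


def flag_buried_answers(paragraphs: list[str], term: str, max_lead_words: int = 25) -> list[int]:
    """Indexes of paragraphs that mention `term` only after more than `max_lead_words` words."""
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        lead = words_before_term(paragraph, term)
        if lead is not None and lead > max_lead_words:
            flagged.append(index)
    return flagged


paragraphs = [
    "Entity SEO is the practice of optimizing how search engines and AI systems recognize your brand.",
    "Over the past few years, as search has evolved and new platforms have emerged and marketers "
    "have debated what matters and which tactics still work, many teams have started asking about "
    "entity SEO.",
]

print(flag_buried_answers(paragraphs, "entity SEO"))
```

Here the first paragraph opens with the definition and passes, while the second makes the reader wait 31 words before naming the topic and is flagged.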

Source Authority Signals

Being mentioned on authoritative platforms compounds your entity strength and creates additional retrieval targets. The sources that carry the most weight in our citation analysis: industry-specific directories and review platforms (Clutch for agencies, G2 and Capterra for software, and their equivalents in other verticals), professional publications, news sites that maintain editorial standards, and .edu or .gov references where applicable.

Press coverage matters, but not all press is equal. A feature article in a trade publication where a journalist independently discusses your work carries significantly more entity signal than a self-published press release on a wire service. Press releases have value for other reasons (syndication, backlinks, announcement visibility), but their entity signal for AI systems is limited: a wire-service release is easy to recognize as self-published distribution rather than earned media.

What Doesn’t Work

Some tactics that worked in traditional SEO are neutral or counterproductive for AI visibility:

- Keyword stuffing: AI systems process semantic meaning, not keyword density. Awkwardly inserting “best AI search optimization agency” twelve times on a page doesn’t improve your citation probability — it may decrease it because AI systems associate keyword stuffing with low-quality sources.

- Thin content across many pages: Creating fifty shallow pages targeting keyword variations is a traditional SEO play that AI systems handle differently. One comprehensive page that thoroughly addresses a topic gets cited. Fifty thin pages that each partially address it rarely get cited at all.

- Vague authority claims: “We are a leading provider of innovative solutions” is the exact kind of language AI systems learn to ignore. Specific claims with evidence — “We have completed 1,200 WordPress builds across 14 industries since 2018” — create the kind of concrete entity signals that AI can reference.

The Measurement Problem Nobody Talks About

There is no Google Search Console for ChatGPT. There is no Analytics dashboard that shows you how many times Perplexity recommended your brand. And anyone telling you they have comprehensive measurement for AI search visibility is overselling their capabilities.

This is the most honest assessment we can give: measurement in AI search optimization is immature. It’s the weakest part of the discipline. And it’s the main reason that Fishkin’s ROI skepticism has teeth — if you can’t measure impact, how do you justify investment?

What You Can Measure Today

Direct AI referral traffic in Google Analytics 4: GA4 can identify traffic from chatgpt.com (ChatGPT’s address since 2024, formerly chat.openai.com), perplexity.ai, and other AI platforms. Filter your referral sources for these domains. The numbers will likely be small relative to organic search, but they are real and growing. Setting up this tracking takes minutes and gives you a baseline to measure against.
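Hand-filtering referral sources works, but the same classification can be written down once and reused, for example on exported session-source data. The hostnames in this Python sketch are assumptions based on each product’s public web address (both the current and former ChatGPT addresses are listed); compare them against the referrers that actually appear in your own reports. The function names are hypothetical.

```python
import re
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against the sources that appear in your own data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
}


def classify_referrer(source: str) -> Optional[str]:
    """Map a referrer URL or a bare hostname to an AI platform name, or None."""
    if "://" not in source:
        source = "//" + source  # let urlparse treat a bare hostname as a network location
    host = (urlparse(source).hostname or "").lower()
    for domain, platform in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return platform
    return None


def source_regex() -> str:
    """One alternation pattern covering every listed hostname, for regex-based filters."""
    return "|".join(re.escape(domain) for domain in AI_REFERRERS)


for s in ["chatgpt.com", "https://www.perplexity.ai/search", "google"]:
    print(s, "->", classify_referrer(s))
print(source_regex())
```

Subdomains such as `www.perplexity.ai` are matched by the suffix check, while unrelated Google traffic is not counted as Gemini.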

Brand mention monitoring: Tools like Otterly.ai, Peec AI, and Knowatoa allow you to run specific queries across ChatGPT, Perplexity, and Google AI Overviews and check whether your brand appears in the responses. These tools have limitations — AI responses vary by session, location, and model version — but they provide directional data. At Taptwice Global, we run periodic citation audits across platforms to track brand visibility trends for our clients.

Branded search volume as a proxy: If AI is recommending your brand, some percentage of users will Google your brand name afterward. Rising branded search volume (trackable via Google Search Console, Google Trends, and tools like Semrush or Ahrefs) can serve as a proxy for AI recommendation activity — though it conflates AI-driven awareness with other brand-building activities.

Passage-level citation tracking: Some emerging tools track which specific pages and passages on your site are being cited by AI platforms. This is the most actionable form of measurement because it tells you what content format and structure is working. But these tools are young and their data coverage is incomplete.

What You Can’t Measure Yet

You cannot measure how many times an AI system recommended your brand from training data (not web browsing) because there is no callback or referral signal for those interactions. You cannot reliably measure the total volume of AI queries relevant to your business. You cannot track the conversion path from “AI mentioned my brand” to “customer signed a contract” with the precision you’re used to in paid media or even organic search.

These limitations are real, and they should inform your investment decisions. AI search optimization should not replace your measurable marketing channels. It should supplement them, with the understanding that you’re investing partly in a future channel whose measurement infrastructure is still being built.

A Reasonable Measurement Framework for Now

Track three things monthly: AI referral traffic in GA4, branded search volume trends, and periodic brand mention audits across ChatGPT, Perplexity, and Google AI Overviews. Compare these numbers quarter over quarter. You won’t get attribution-level precision, but you’ll get directional signals that tell you whether your efforts are working. That’s the best anyone can honestly offer right now.
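The quarter-over-quarter comparison is simple arithmetic, sketched here in Python with hypothetical monthly numbers. Counts such as AI referral sessions are summed per quarter, while rates such as the share of audit queries that mention your brand are averaged; each quarter is then compared with the one before it. The function names are illustrative.

```python
from statistics import mean
from typing import Callable, Optional, Sequence


def to_quarters(monthly: Sequence[float], combine: Callable[[Sequence[float]], float]) -> list[float]:
    """Combine each run of three months into one quarterly value; a trailing partial quarter is dropped."""
    complete = len(monthly) - len(monthly) % 3
    return [combine(monthly[i:i + 3]) for i in range(0, complete, 3)]


def quarter_over_quarter(quarters: Sequence[float]) -> list[Optional[float]]:
    """Percent change between consecutive quarters, rounded to one decimal; None if the base is zero."""
    return [
        None if prev == 0 else round(100 * (curr - prev) / prev, 1)
        for prev, curr in zip(quarters, quarters[1:])
    ]


# Hypothetical figures for six months, starting at the first month of a quarter.
ai_referral_sessions = [40, 52, 48, 61, 70, 69]             # counts: summed per quarter
brand_mention_rate = [0.20, 0.25, 0.30, 0.30, 0.35, 0.40]   # rates: averaged per quarter

print(quarter_over_quarter(to_quarters(ai_referral_sessions, sum)))
print(quarter_over_quarter(to_quarters(brand_mention_rate, mean)))
```

With the sample traffic figures, sessions rise from 140 in the first quarter to 200 in the second, a 42.9% increase. A single quarter’s change is noisy, so the trend across several quarters says more than any one number.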

Frequently Asked Questions

Is AEO just hype or actually worth investing in?

It depends on your market. If your buyers are using AI platforms to research vendors or products — and in B2B SaaS, professional services, and e-commerce, they increasingly are — then being absent from AI recommendations has a real cost. The hype part is the packaging: agencies selling “revolutionary AEO transformation” at premium prices for work that largely consists of good SEO plus entity optimization. The substance underneath the hype is real. Start with Bing indexing, entity consistency, and structured content. Those investments pay off regardless of how the AI search landscape evolves.

Should I hire a separate AEO agency or can my SEO agency handle it?

Most competent SEO agencies can handle 70–80% of AI search optimization because the foundational work — technical SEO, content quality, schema markup — is shared. Where you may need specialized help is in entity strategy (auditing and consolidating your brand presence across platforms), AI citation analysis (understanding how AI platforms specifically select and cite sources), and training data influence (building the kind of web presence that affects future model training). Ask your SEO agency directly: “Do you monitor our brand’s visibility in ChatGPT and Perplexity? Do you optimize for Bing?” If the answer is no to both, you have a gap.

Does llms.txt actually help with AI visibility?

The llms.txt proposal (a file placed at your domain root, similar to robots.txt, that provides LLMs with structured information about your site) is still experimental. There is no confirmed evidence that any major AI platform currently reads or uses llms.txt files for source selection or entity recognition. It may become a standard in the future, and implementing it takes minimal effort, so there’s no harm in adding one. But don’t treat it as a meaningful optimization — it’s a speculative bet with near-zero implementation cost. Focus your energy on the signals that demonstrably work: schema markup, entity consistency, and content structure.
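If you do add one, the file is small. The sketch below assembles llms.txt text using the layout described in the proposal as we understand it: an H1 with the site name, a blockquote summary, then H2 sections that list Markdown links with optional notes. The company name, URLs, and section titles are hypothetical. Check the current proposal text before publishing, because the format is not a settled standard.

```python
def build_llms_txt(name: str, summary: str, sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Assemble llms.txt text: H1 name, blockquote summary, then H2 sections of Markdown links.

    Layout follows the llms.txt proposal as we understand it (an assumption; verify first).
    Each link is (label, url, note); an empty note omits the ": note" suffix.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for title, links in sections.items():
        lines += [f"## {title}", ""]
        for label, url, note in links:
            lines.append(f"- [{label}]({url})" + (f": {note}" if note else ""))
        lines.append("")
    # Exactly one trailing newline at the end of the file.
    return "\n".join(lines).rstrip("\n") + "\n"


if __name__ == "__main__":
    # Hypothetical company and URLs.
    print(build_llms_txt(
        "Example Co",
        "Example Co designs and builds e-commerce websites for mid-size retailers.",
        {
            "Services": [
                ("E-commerce design", "https://example.com/services/ecommerce", "Scope, process, and pricing"),
                ("Case studies", "https://example.com/work", ""),
            ],
        },
    ))
```

Save the output as llms.txt at your domain root, next to robots.txt. Given the uncertainty above, treat it as a one-time, low-effort task rather than something to iterate on.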

How do I check if my brand appears in ChatGPT?

Open ChatGPT and ask it the kinds of questions your customers would ask when researching your product or service category. Don’t ask “Do you know [Brand Name]?” — that tests name recognition, not recommendation. Ask “What are the best [your service] providers in [your market]?” or “Which companies should I consider for [specific need]?” or “Compare [your brand] vs [competitor].” Run these queries with web browsing both enabled and disabled. Results with browsing disabled show what the model knows from training data; results with browsing enabled show what it can find live. Document the results and repeat monthly to track changes. Tools like Otterly.ai can automate this process.
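Monthly results are only comparable if you run the same fixed set of queries each time and log the answers in the same shape. The sketch below builds that query plan from the question templates above and pairs each query with browsing on and off. Once you have filled in the results by hand, it computes a mention rate for each mode. The placeholder values (“Acme Studio,” “Example Agency,” and so on) are hypothetical. Nothing here calls ChatGPT; the code only organizes a manual process.

```python
from itertools import product
from typing import Optional

# Templates mirror the example questions in the text; the placeholders are filled per business.
TEMPLATES = [
    "What are the best {service} providers in {market}?",
    "Which companies should I consider for {need}?",
    "Compare {brand} vs {competitor}.",
]


def build_audit_plan(values: dict[str, str], month: str) -> list[dict]:
    """One row per (template, browsing mode); results are filled in by hand after each run."""
    plan = []
    for template, browsing in product(TEMPLATES, (True, False)):
        plan.append({
            "month": month,
            "query": template.format(**values),
            "browsing": browsing,      # True = web browsing enabled, False = training data only
            "brand_mentioned": None,   # set to True/False after reading the answer
        })
    return plan


def mention_rate(plan: list[dict], browsing: bool) -> Optional[float]:
    """Share of completed queries in one browsing mode where the brand was named."""
    done = [r for r in plan if r["browsing"] == browsing and r["brand_mentioned"] is not None]
    if not done:
        return None
    return sum(r["brand_mentioned"] for r in done) / len(done)


if __name__ == "__main__":
    plan = build_audit_plan(
        {
            "service": "e-commerce web design",
            "market": "Chicago",
            "need": "a Shopify migration",
            "brand": "Acme Studio",          # hypothetical brand
            "competitor": "Example Agency",  # hypothetical competitor
        },
        month="2026-03",
    )
    for row in plan:
        print(row["browsing"], row["query"])
```

Keep the browsing-on and browsing-off rates separate. A gap between them tells you whether your visibility comes from training data or from what the model can find live.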

Are press releases useful for AI search visibility?

Partially. Press releases distributed through wire services (GlobeNewswire, PR Newswire, Business Wire) create web presence and backlinks that contribute to entity signals over time. However, AI systems can distinguish between self-published press releases and earned editorial coverage. A press release announcing your company’s new product creates a data point; a journalist at a trade publication independently writing about your product creates an authority signal. Both have value, but they’re not equivalent for AI visibility. Press releases are most useful for AI search when editorial outlets pick them up and rewrite them, because that pickup creates earned media signals, which AI systems weigh more heavily than the original release.

Is it too early for small businesses to invest in this?

Not if you frame the investment correctly. The foundational work (making sure your site is cleanly indexed in Bing, your schema markup is comprehensive, your brand information is consistent across platforms, and your content includes direct-answer passages) benefits your traditional SEO and your AI visibility simultaneously. That’s not speculative investment; it’s good marketing hygiene with a bonus channel. What would be premature for most small businesses is hiring a dedicated AEO agency at premium rates or rebuilding your entire content strategy around AI optimization. Start with the dual-benefit work, measure AI referral traffic and brand mentions, and scale investment as the data justifies it.